High-performance, low-power on-board vision-based navigation and GNC for Space landers

Overview

Every autonomous landing in Space (Moon, Mars, etc.) has to go through the infamous “10 minutes of terror”: the entry, descent and landing (EDL) phase, in which the spacecraft goes from very high speed (e.g., 13,000 mph) to zero with perfect sequence, precision and timing. The on-board computer has to do it all by itself, with no help from the ground.

To increase the precision of the EDL phase, considerable effort is currently being put into vision-based navigation (VBN). The main benefit of these algorithms is their high accuracy. The biggest challenge, however, is the low frame rate at which images are processed: around 0.5 frames per second (FPS), even with the most advanced on-board computers (OBC) that include an FPGA (a programmable hardware device that can perform heavy computational tasks with low power consumption). Such a low rate reduces confidence in the overall navigation algorithm and therefore puts the whole mission at risk of failure.
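To give a feel for what 0.5 FPS means in practice, the sketch below computes the time between processed frames and the distance a lander covers in that time. Only the 0.5 FPS figure and the 13,000 mph speed come from the text above; the function name and the other sample speeds are illustrative assumptions, not mission data.

```python
# Exact conversion: 1 mile = 1609.344 m, 1 hour = 3600 s.
MPH_TO_MPS = 1609.344 / 3600.0


def distance_between_frames(speed_mps: float, fps: float) -> float:
    """Distance in metres travelled between two consecutive processed frames."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return speed_mps / fps


if __name__ == "__main__":
    fps = 0.5  # frame rate quoted in the text
    print(f"Time between frames: {1.0 / fps:.1f} s")

    # The first speed is the one quoted in the text; the others are
    # hypothetical values chosen only to illustrate later descent phases.
    samples = [
        ("13,000 mph (from the text)", 13_000 * MPH_TO_MPS),
        ("hypothetical 100 m/s", 100.0),
        ("hypothetical 10 m/s", 10.0),
    ]
    for label, speed_mps in samples:
        gap_m = distance_between_frames(speed_mps, fps)
        print(f"{label}: {gap_m:,.0f} m travelled between frames")
```

The point of the calculation is that the navigation filter receives no new visual information over that whole distance, so any increase in frame rate shrinks the gap proportionally.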

Furthermore, software development for the EDL phase is extremely difficult, and achieving the necessary levels of determinism and efficiency is very costly in terms of both budget and schedule.

Image data processing in Space

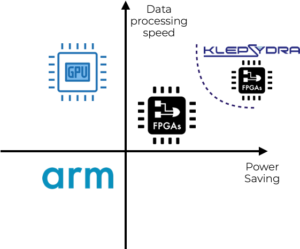

Several data processing solutions have been studied for in-space image processing, and for VBN in particular:

- FPGAs, i.e., programmable hardware, can reduce power consumption and increase data processing performance. However, their main drawback is high programming complexity.

- GPUs are probably the fastest data processors today. However, large power consumption, thermal load and performance bottlenecks in data transfer to GPU memory reduce their appeal for in-space applications.

- Software solutions running on the host computer are the most attractive option due to their programming simplicity and relatively good performance. However, power consumption is high and data processing is often not fast enough.

Klepsydra: more data processing with less power

Klepsydra has developed an advanced software framework for edge computing applications that provides best-in-class data processing performance whilst significantly reducing latency and power consumption. This software can be used standalone or combined with an FPGA. In either case, Klepsydra outperforms standard image data processing solutions for VBN in Space.

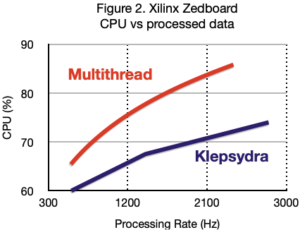

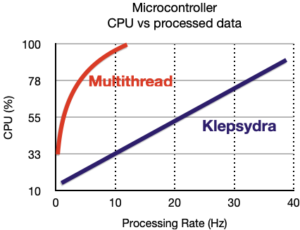

Figure 2 shows a comparison of these data processing solutions. Without high-performance data processing accompanying them, these solutions are unable to meet the power budget and/or the data processing requirements.

Klepsydra Computer Vision Toolbox: multiply on-board image processing speed with less power and more determinism

Furthermore, figures 3 and 4 show the substantial performance increase and power consumption reduction achieved by Klepsydra compared with traditional data processing techniques.

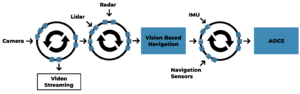

Benefits of developing VBN for Space with Klepsydra

By combining Klepsydra core and the Klepsydra Computer Vision Toolbox (KCVT), VBN and guidance, navigation and control (GNC) applications can be developed in a streaming manner, as shown in figure 6. These pipelines, or streams, are not only high-performance, deterministic and efficient in their use of computing resources, but also very easy to program.
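The streaming idea can be illustrated with a generic sketch that uses only the Python standard library. It is not Klepsydra's API: the stage functions (`detect_features`, `estimate_pose`, `guidance_command`) and the `run_pipeline` helper are hypothetical placeholders. Each stage runs in its own thread and hands results to the next through a bounded queue, so a slow stage applies back-pressure instead of dropping data.

```python
import queue
import threading
from typing import Any, Callable, Iterable, List

_STOP = object()  # sentinel that tells each stage to shut down


def _stage(func: Callable[[Any], Any], inbox: queue.Queue, outbox: queue.Queue) -> None:
    """Apply func to every item from inbox and forward the result to outbox."""
    while True:
        item = inbox.get()
        if item is _STOP:
            outbox.put(_STOP)  # propagate shutdown downstream
            return
        outbox.put(func(item))


def run_pipeline(source: Iterable[Any],
                 funcs: List[Callable[[Any], Any]],
                 maxsize: int = 4) -> List[Any]:
    """Push every item of source through funcs, one thread per stage."""
    queues = [queue.Queue(maxsize=maxsize) for _ in range(len(funcs) + 1)]

    def feed() -> None:
        for item in source:
            queues[0].put(item)  # blocks when the first stage is busy
        queues[0].put(_STOP)

    threads = [threading.Thread(target=feed)]
    threads += [
        threading.Thread(target=_stage, args=(f, queues[i], queues[i + 1]))
        for i, f in enumerate(funcs)
    ]
    for t in threads:
        t.start()

    # Drain the last queue in the calling thread until shutdown arrives.
    results = []
    while True:
        out = queues[-1].get()
        if out is _STOP:
            break
        results.append(out)
    for t in threads:
        t.join()
    return results


# Hypothetical stages: integers stand in for camera frames.
def detect_features(frame: int) -> dict:
    return {"frame": frame, "features": frame * 2}


def estimate_pose(data: dict) -> dict:
    return {**data, "pose": data["features"] + 1}


def guidance_command(data: dict) -> tuple:
    return (data["frame"], data["pose"])


if __name__ == "__main__":
    print(run_pipeline(range(5), [detect_features, estimate_pose, guidance_command]))
```

Because each stage is a single thread reading from a FIFO queue, results come out in the order the frames went in. Error handling is omitted for brevity: an exception inside a stage would stall this sketch.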

Our technology can help Space companies carry out advanced Space landing applications by speeding up image processing, simplifying development and guaranteeing real-time operation with no data losses, thereby increasing the chances of success of VBN in Space while at the same time reducing development costs.
