Klepsydra for GPU

GPUs (graphics processing units) are specialized devices that are designed to speed up graphics rendering. They are generally used in games and in applications that require intensive use of graphics.

In recent years, they have also been used in a variety of other applications, including edge computing. In edge computing, GPUs can be used to perform a variety of tasks, such as image and video processing, machine learning and artificial intelligence (AI) inference, and data analysis. They are particularly well-suited for this type of task because they are designed to perform many calculations simultaneously and can therefore process large amounts of data quickly.

Using GPUs in edge computing offers several benefits, including:

- Low latency – Because edge computing systems are often located close to the end user, the use of GPUs can help reduce latency, or the time it takes to complete a task.

- Scalability – GPUs can be used to scale edge computing systems to meet the demands of large numbers of users or devices.

In general, GPUs in edge computing enable real-time data processing and the deployment of AI and machine learning applications at the network edge.

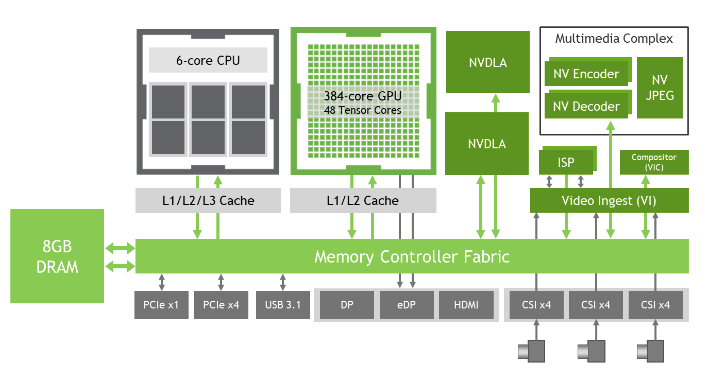

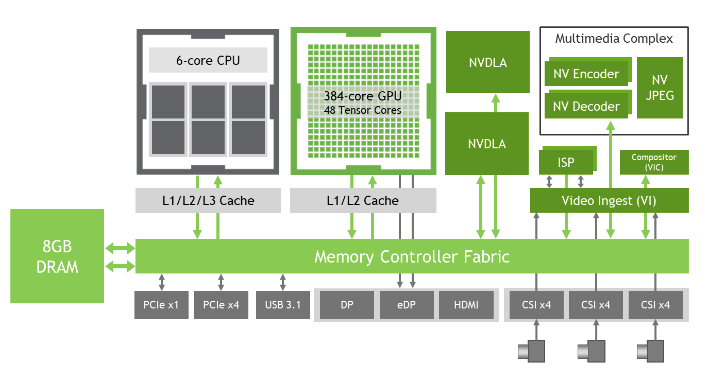

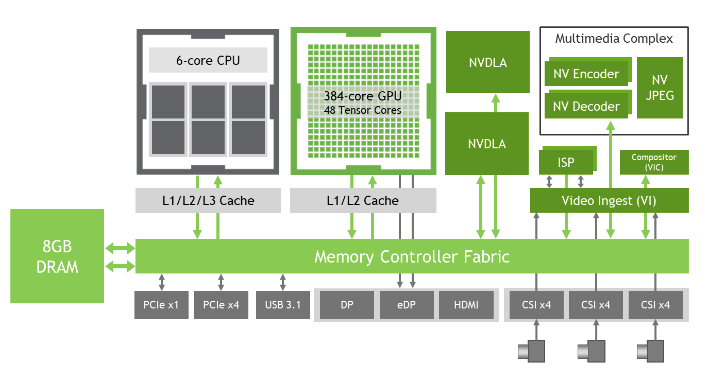

Figure 1. NVIDIA JETSON Block Diagram

GPU challenges

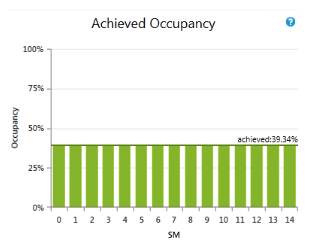

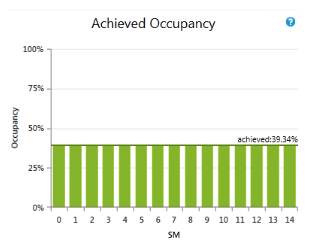

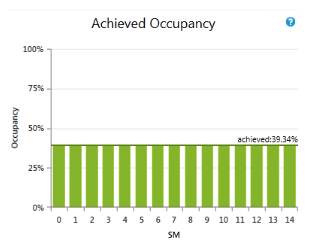

Firstly, it is important to clarify the concept of “occupancy” in GPU scheduling. Occupancy refers to the number of threads that can be “active” on a GPU at any given time, where a thread is considered active if it can be executed by the GPU Streaming Multiprocessors (SMs). The occupancy of a GPU kernel (a function that runs on the GPU) can have a significant impact on its performance.

Generally, the higher the occupancy, the better the performance. Higher occupancy allows more threads to be active at the same time, which increases the parallelism available to the GPU and reduces idle time for the SMs.
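In CUDA terminology, occupancy is usually quoted as a ratio rather than a raw thread count. A common way to write it, with threads grouped into warps of 32, is:

$$
\text{occupancy} = \frac{\text{active warps per SM}}{\text{maximum resident warps per SM}}
$$

As an illustration only (the limit is assumed here, not taken from a specific board): on an SM that can hold 64 resident warps, a kernel that keeps 32 warps active has an occupancy of $32 / 64 = 0.5$, or 50%.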

On the other hand, occupancy is limited by the resources each thread consumes, such as registers and shared memory: the more of these a kernel uses, the fewer threads can be active at the same time. To optimize the occupancy of a GPU kernel, programmers employ a variety of techniques, such as using more efficient data structures, minimizing the use of registers and shared memory, and carefully designing the organization and synchronization of threads in the kernel.
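The trade-off between resource usage and occupancy can be sketched with a small host-side calculation. This is a simplified estimate, not the method used by any particular tool. The names `SmLimits`, `KernelConfig`, `resident_blocks` and `occupancy` are hypothetical, the per-SM limits are illustrative values that differ between GPU architectures, and the model ignores the allocation granularity that real hardware applies to registers and shared memory.

```cpp
#include <algorithm>
#include <cassert>

// Illustrative per-SM limits (assumed; real values depend on the GPU).
struct SmLimits {
    int max_threads;       // maximum resident threads per SM
    int max_blocks;        // maximum resident blocks per SM
    int registers;         // 32-bit registers available per SM
    int shared_mem_bytes;  // shared memory available per SM
};

// Resource usage of one kernel launch configuration (hypothetical type).
struct KernelConfig {
    int threads_per_block;
    int registers_per_thread;
    int shared_mem_per_block;  // bytes; 0 if the kernel uses none
};

// Number of blocks that fit on one SM at the same time: the tightest of
// the thread, block, register and shared-memory limits wins.
int resident_blocks(const SmLimits& sm, const KernelConfig& k) {
    assert(k.threads_per_block > 0 && k.registers_per_thread > 0);
    int by_threads = sm.max_threads / k.threads_per_block;
    int by_regs = sm.registers / (k.registers_per_thread * k.threads_per_block);
    int by_smem = k.shared_mem_per_block > 0
                      ? sm.shared_mem_bytes / k.shared_mem_per_block
                      : sm.max_blocks;  // no shared memory: not a constraint
    return std::min({sm.max_blocks, by_threads, by_regs, by_smem});
}

// Theoretical occupancy: resident threads divided by the SM's thread limit.
double occupancy(const SmLimits& sm, const KernelConfig& k) {
    int threads = resident_blocks(sm, k) * k.threads_per_block;
    return static_cast<double>(threads) / sm.max_threads;
}
```

With the assumed limits `SmLimits{2048, 32, 65536, 49152}`, a 256-thread block using 32 registers per thread fits 8 blocks per SM, which is full occupancy. Doubling register usage to 64 per thread halves that to 4 blocks, or 50%. Adding 16 KiB of shared memory per block to the first configuration caps it at 3 blocks, or 37.5%.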

Figure 2. Example of occupancy chart

Klepsydra for GPU

Klepsydra has developed a new software product for GPU programming called Klepsydra GPU Streaming. Based on the Klepsydra SDK, it solves the occupancy problem (explained above) very efficiently. It is also very easy to use. With a simple C++/CUDA API, Klepsydra GPU Streaming is compatible with most NVIDIA boards.