Written by Isabel del Castillo (COO)

A novel approach to Artificial Intelligence On-Board

New generations of spacecrafts are required to perform tasks with an increased level of autonomy. Space exploration, Earth Observation, space robotics, etc. are all growing fields in Space that require more sensors and more computational power to perform these missions.

Sensors, embedded processors, and hardware in general have hugely evolved in the last decade, equipping embedded systems with large number of sensors that will produce data at rates that has not been seen before while simultaneously having computing power capable of large data processing on-board. Near-future spacecrafts will be equipped with large number of sensors that will produce data at high-speed rates in space and data processing power will be significantly increased.

Future missions such as Active Debris Removal will rely on novel high-performance avionics to support image processing and Artificial Intelligence algorithms with large workloads. Similar requirements come from Earth Observation applications, where data processing on-board can be critical in order to provide real-time reliable information to Earth. This new scenario has brought new challenges with it: low determinism, excessive power needs, data losses and large response latency.

In this project, Klepsydra AI is used as a novel approach to on-board artificial intelligence. It provides a very sophisticated threading model combination of pipeline and parallelization techniques applied to deep neural networks, making AI applications much more efficient and reliable. This new approach has been validated with several DNN models and two different computer architectures. The results show that the data processing rate and power saving of the applications increase substantially with respect to standard AI solutions.

On-board AI for Earth Observation

The amount of data produced by a multi-spectral camera prevents real-time data transfer to the ground due to the limitations of downlink speeds, thus requiring large on-board data storage. Several high-level solutions have been proposed to improve this including the use on-board Artificial Intelligence to filter irrelevant or low-quality data and send only a subset of data.

Space autonomy

In a different field, vision-based navigation, there is also a challenge of data processing combined with AI algorithms. One example is rendezvous with uncooperative objects in space, e.g., debris removal. Another example of this is autonomous pinpoint planetary landing, where the number of sensors and the complexity of the Guidance Navigation and Control algorithms make this discipline still one of the biggest challenges in space. One common element to these two use cases, is a well-known fact in control engineering: for optimal control algorithms, the higher the rate of sensor data, the better is the performance of the algorithm. Moreover, communication limitations may result in severe delays in the generation of alarms raised upon detection of critical events. With Klepsydra, data can be analysed onboard in real time and alerts can be immediately communicated to the ground segment.

Inference in Artificial Intelligence

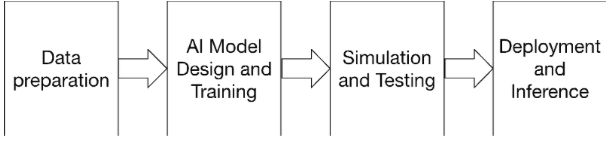

There are several components to artificial intelligence. First, there is the training and design of the model. This activity is usually carried out by data scientists for a specific field of interest. Once the model is designed and trained, it can be deployed to the target computer for real-time execution. This is what is called inference.

Inference consists of two parts: the trained model and the AI inference engine to execute the model. The focus of this research has been solely on the inference engine software implementation.

Figure 1. AI main components

Trends in Artificial Intelligence inference acceleration

The most common operation in AI inference by far is matrix multiplications. These operations are constantly repeated for each input data to the AI model. In recent years, there has been a substantial development in this area with both industry and academia progressing steadily in this field. While the current trend is to focus on hardware acceleration like Graphic Processing Units (GPU) and Field-programmable gate array (FGPA), these techniques are currently not broadly available to the Space industry due to radiation issues and excessive energy consumption for the former, and programming costs for the latter.

The use of CPU for inference, however, has been also undergoing an important evolution taking advantage of modern Floating processing unit (FPU) connected to the CPU. CPUs are widely used in Space due to large Space heritage and also ease of programming. Several AI inferences engines are available for CPU+FPU setups. The results of extensive research in building a new AI inference show that both reduces power consumption and also increases data throughput.

Parallel processing applied to Artificial Intelligence inference

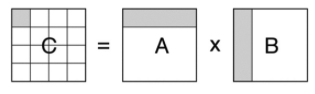

Within the field of inference engines for CPU+FPU, the focus for performance optimisation has been on matrix multiplication parallelisation. This process consists in splitting the operations required for a matrix multiplication into smaller to be executed by several threads in parallel. Figure 2 shows an example of this type of process, where rows from the left-hand matrix and columns from the right-hand matrix are individual operations to be executed by different threads.

Figure 2. Parallel matrix multiplication

Data pipelining

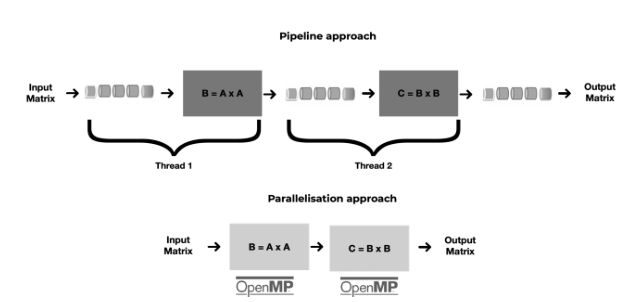

The theoretical advantage of the above approach is its minimal latency. However, there is an emerging alternative approach to parallelisation, which is based in the concept of pipelines. This approach works in a similar manner to an assembly line, where each part of this line corresponds to a complete matrix multiplication. This approach is particularly well suited for AI deep neural networks (DNN). The main advantage is its higher throughput, as it makes pipeline a reliable approach to data processing in resources constraint environment, like Space on-board computers. Pipelining can enable a substantial increase in throughput with respect to traditional parallelisation.

Figure 3. Pipelining vs parallelisation

The threading model

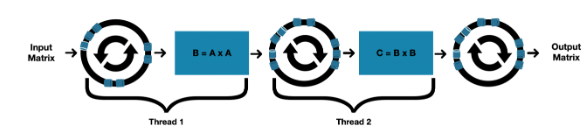

Figure 4. Pipelining approach

This new pipelining algorithm consists of these main elements:

- Use of lock-free ring-buffers to connect the matrix multiplications operations.

- Use of FPU vectorisation to accelerate the matrix multiplications.

- One ring-buffer per thread, meaning that each matrix multiplication happens in one thread.

Combining the concept of pipelining above with lock-free algorithms, a new pipelining approach has been developed. This can process data at 2 to 8 times increased data rate, while at the same time reduce power consumption up to 75%

The Katesu project

The current commercial version of Klepsydra AI has been successfully validated in an ESA activity called KATESU for Teledyne e2v’s LS1046 and Xilinx ZedBoard onboard computers with outstanding performance results. As part of this activity two DNN algorithms provided by ESA were tested: CME and OBPMark

¹Coronal Mass Ejections

https://indico.esa.int/event/393/timetable/#25-methods-and-deployment-of-m

²https://zenodo.org/record/5638577

David Steenari, Leonidas Kosmidis, Ivan Rodriguez-Ferrandez, Alvaro

Jover-Alvarez, & Kyra Förster. OBPMark (On-Board Processing

Benchmarks) –

Open Source Computational Performance Benchmarks for Space

Applications.

OBDP2021 – 2nd European Workshop on On-Board Data Processing

(OBDP2021).

Further references:

Performance Results

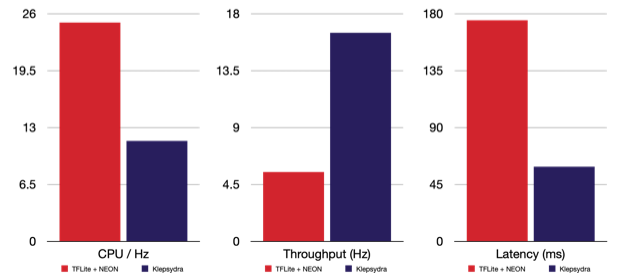

Figure 1.Performance results of CME on LS104

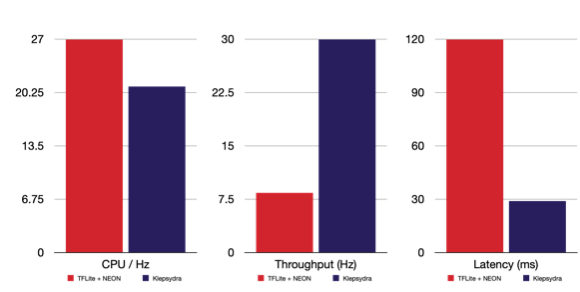

Figure 2. Performance results of quantised CME on LS1046

http://obpmark.org

https://obpmark.github.io/

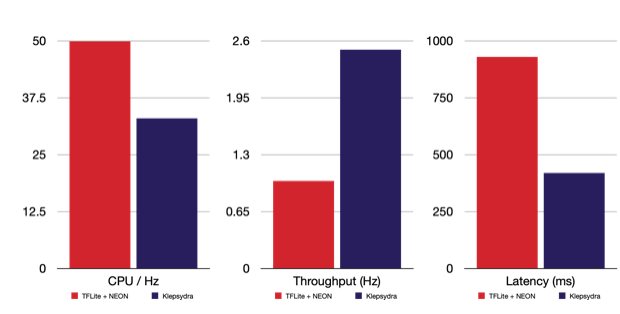

Figure 3. Performance results of quantised CME on ZedBoard

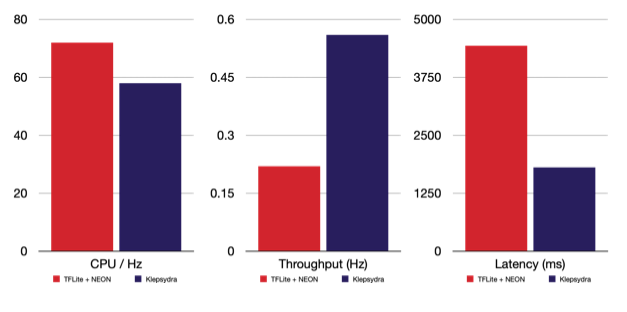

Figure 4. Performance results of OBPMark on LS1046

Conclusions

These performance results of Klepsydra AI applied to Space computers show a substantial increase in speed, up to 4 time with a reduction of CPU usage of up to 50%. Therefore, the benefits for Space system are clear for those areas needing large data processing: Earth Observation, Vision-based Navigation, Lunar Precision Landing and Space Robotics.