Klepsydra AI on the edge. High performance with low power consumption

Description

Overview of Klepsydra AI

Klepsydra AI is a high-performance deep neural network engine for edge computers. As with standard edge AI solutions, customers can use it to deploy existing or newly trained models on the edge.

Moreover, Klepsydra AI offers three main benefits:

- Process up to 5x more data with AI

- Reduce power consumption

- Compatibility with the main providers of AI software.

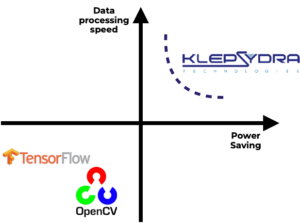

Klepsydra AI has been inspired by high frequency trading technologies. Subsequently, the performance tests show that it outperforms TensorFlowLite and OpenCV in both processing speed and power consumption.

Figure 1. Klepsydra AI positioning in the market.

Core benefits of Klepsydra AI on the edge

Safety and reliability

Real-time edge AI. Klepsydra AI processes data in real time with low latency.

Predictable edge AI. Klepsydra AI is substantially more stable, predictable and deterministic than other edge solutions.

Cost

Lower hardware cost. With Klepsydra AI, more data can be processed on the same hardware, with less power and memory, and without cloud computing support.

Compatibility

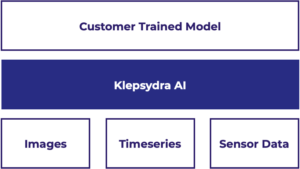

Klepsydra AI is compatible with most AI formats and AI software solutions. It also accepts input data in a variety of formats including images and time series.

Furthermore, Klepsydra can be deploy to several edge computers including, but not limited to, Odroid XU4, RaspberryPi4, Intel NUC, etc.

Figure 2. Klepsydra AI setup.

Applications of AI on the edge

With Klepsydra AI, customers can deploy on the edge the models they have developed and trained, whether pre-existing or new. Klepsydra AI is used in a wide variety of applications, including:

- Autonomous robotic vision-based navigation

- 3D model analysis of infrastructure in real time

- Surveillance and security

- Pose estimation and relative navigation

- Data quality check.

Technical specs of Klepsydra AI

Overview

Klepsydra AI is an inference engine for deep neural networks (DNNs) aimed at edge computing applications.

Klepsydra AI has the following modules and APIs:

- Application API. The inference API, which includes instantiation of the model and an asynchronous inference API, i.e., a callback API.

- Model importer API.

- Performance configuration module. This module allows fine-grained performance tuning of the AI model deployment.
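To make the idea of an asynchronous, callback-based inference API more concrete, here is a minimal Python sketch. It is not the Klepsydra AI API: the class `AsyncInferenceEngine`, its methods `predict_async` and `shutdown`, and the stand-in `toy_model` are all hypothetical names chosen for illustration. The sketch only shows the general pattern, in which the model is instantiated once, each input is queued without blocking the caller, and the result is delivered later through a callback.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Callable, List, Sequence

# Hypothetical names throughout: this is NOT the Klepsydra AI API.
Model = Callable[[Sequence[float]], List[float]]
Callback = Callable[[List[float]], None]


class AsyncInferenceEngine:
    """Illustrative engine: set up a model once, then run inference asynchronously."""

    def __init__(self, model: Model, workers: int = 2) -> None:
        self._model = model
        self._pool = ThreadPoolExecutor(max_workers=workers)

    def predict_async(self, data: Sequence[float], on_result: Callback) -> Future:
        """Queue one input; on_result receives the output on a worker thread."""
        def task() -> None:
            on_result(self._model(data))
        return self._pool.submit(task)

    def shutdown(self) -> None:
        """Wait for queued work to finish and release the worker threads."""
        self._pool.shutdown(wait=True)


def toy_model(data: Sequence[float]) -> List[float]:
    """Stand-in for a trained network: scales the input so that it sums to 1."""
    total = sum(data)
    return [x / total for x in data]


results: List[List[float]] = []
engine = AsyncInferenceEngine(toy_model)
engine.predict_async([1.0, 1.0, 2.0], results.append)  # returns immediately
engine.shutdown()  # blocks until the callback has run
```

In this pattern the caller can keep acquiring sensor data while earlier inputs are still being processed, which is the usual motivation for a callback interface on an edge device.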

Core features

Klepsydra has three core features:

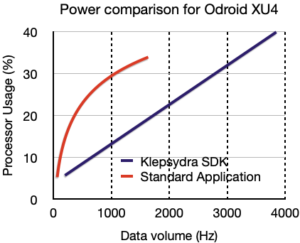

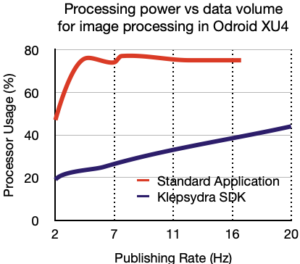

- 2x to 6x increase in data processing capacity compared with standard techniques (e.g. OpenCV and TensorFlow Lite).

- 30% to 50% less power consumption than standard techniques.

- Accepts ONNX, TensorFlow and Caffe sequential models.
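The throughput and power figures above can be combined into a rough energy-per-item estimate. The sketch below is plain arithmetic, not a measurement: it assumes that a throughput gain and a power reduction apply at the same time on the same workload, which the figures above do not state. Under that assumption, the energy spent per processed item relative to the baseline is (1 - power reduction) / throughput gain. The function name `relative_energy_per_item` is hypothetical.

```python
def relative_energy_per_item(throughput_gain: float, power_reduction: float) -> float:
    """Energy per processed item, relative to a baseline of 1.0.

    throughput_gain: items per second relative to the baseline (2.0 means 2x).
    power_reduction: fraction of baseline power saved (0.30 means 30% less).
    Assumes both figures hold simultaneously, which is an assumption here.
    """
    if throughput_gain <= 0:
        raise ValueError("throughput_gain must be positive")
    if not 0 <= power_reduction < 1:
        raise ValueError("power_reduction must be in [0, 1)")
    # Energy per item = power * time per item; both are scaled against the baseline.
    return (1.0 - power_reduction) / throughput_gain


# Lower and upper ends of the ranges quoted in the list above.
low_end = relative_energy_per_item(2.0, 0.30)
high_end = relative_energy_per_item(6.0, 0.50)
```

With the lower ends of both ranges (2x and 30%), this gives about 0.35 of the baseline energy per item. Real results depend on the model, the hardware and the workload.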

Figure 3. Power consumption benchmark comparison for AlexNet DNN on Raspberry Pi 4.

Requirements for AI on the edge

Klepsydra is platform independent with the following technical requirements:

- An operating system on the target computer

- A target computer that supports atomic operations

- A C++11 compiler for the target computer

- The Eigen3 and ONNX software packages.

Klepsydra is supported on a growing number of platforms, including:

- Operating system: Linux.

- Processors: ARM (v8, Cortex-A7, Cortex-A9) and x86 (64-bit and 32-bit).

Figure 4. Processed data volume benchmark comparison for AlexNet DNN on Raspberry Pi 4.

Compatibility features

- Supported languages: C++, C, Node.js, Python

- Data format: Float matrices, time series and OpenCV matrix objects (cv::Mat)

- Model format: ONNX, TensorFlow and Caffe.

Request our professional trial

We offer a 90-day trial license that includes email support. Phone and on-site support and training are available on request.

Please fill in the form below and our team will contact you with access to download our products.